When I first started building out my own AI features in my homelab, I avoided AI APIs like the plague because I had always heard how expensive they were. It wasn’t until a few months ago that I realized both Google and OpenAI offer free access to their APIs, with one (not so small) catch.

You don’t have to pay Google or OpenAI to use their API

You only have to pay for heavy use

You might think that API access to AI models is expensive, and you would be right.

But both Google (with Gemini) and OpenAI offer free API tiers for their models. Google’s free API access is actually the easiest to get: it’s straightforward, and Google tells you exactly which models are available for free and which aren’t.

What Google doesn’t tell you very prominently, however, is the free usage limit on those models. It’s more ambiguous than OpenAI’s, but at the end of the day, the models are still free to use. OpenAI, on the other hand, hides its free API access a bit more, but it’s more open about exactly which usage is free.
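Once you have a Gemini API key from Google AI Studio, calling a free model is a single HTTPS request. Below is a minimal sketch using only Python’s standard library. The model name `gemini-2.5-flash` is an assumption (check Google’s list of free models), and because the free limits aren’t spelled out prominently, the sketch backs off and retries when Google answers with HTTP 429 (rate limited). The `opener` and `sleep` parameters exist only so the retry logic can be exercised without a network.

```python
import json
import time
import urllib.error
import urllib.request

# Assumption: Gemini's public REST endpoint; fill in a model that is free for you.
GEMINI_URL = "https://generativelanguage.googleapis.com/v1beta/models/{model}:generateContent"


def build_gemini_request(api_key: str, prompt: str, model: str = "gemini-2.5-flash") -> urllib.request.Request:
    """Build (but don't send) a generateContent request for one text prompt."""
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        GEMINI_URL.format(model=model),
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )


def send_with_backoff(request, retries=3, base_delay=2.0, opener=urllib.request.urlopen, sleep=time.sleep):
    """Send the request; on HTTP 429 wait 2 s, 4 s, 8 s, ... and try again."""
    for attempt in range(retries + 1):
        try:
            with opener(request) as response:
                return json.loads(response.read().decode("utf-8"))
        except urllib.error.HTTPError as err:
            # Only rate-limit errors are retried, and only until retries run out.
            if err.code != 429 or attempt == retries:
                raise
            sleep(base_delay * 2 ** attempt)
```

In a real script you’d call `send_with_backoff(build_gemini_request(key, "..."))` and read the reply text from the returned JSON’s `candidates` list.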

To get free API access from OpenAI, sign up for an OpenAI Platform account (not a ChatGPT account) and head to Settings > Data controls in the Platform dashboard. There you’ll see a few toggles, but the only one that matters is the “Share inputs and outputs with OpenAI” toggle at the bottom. Enable it, and you’ll get free daily usage for quite a few OpenAI models.
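With the toggle enabled, you call the API exactly as a paying user would. Here’s a minimal standard-library sketch against OpenAI’s Chat Completions endpoint; the default model ID `gpt-5-mini` is an assumption, so swap in whichever free-tier model you want. `parse_reply` also pulls out the token count the API reports, which is what counts against the daily allowance.

```python
import json
import urllib.request

OPENAI_URL = "https://api.openai.com/v1/chat/completions"


def build_openai_request(api_key, prompt, model="gpt-5-mini"):
    """Build (but don't send) a single-message Chat Completions request."""
    # Assumption: "gpt-5-mini" is the API ID of the free-tier model you want.
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENAI_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )


def parse_reply(payload):
    """Return the assistant's text and the total tokens the call consumed."""
    text = payload["choices"][0]["message"]["content"]
    used = payload.get("usage", {}).get("total_tokens", 0)
    return text, used
```

To actually send it, pass the request to `urllib.request.urlopen` and hand `json.load(response)` to `parse_reply`.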

For example, you get 250,000 tokens per day across some of OpenAI’s more powerful models, like GPT-5.2, GPT-5.1-Codex, and more. For the lighter models, like GPT-5.1-Codex-Mini, GPT-5-Mini, GPT-5-Nano, and others, you get up to 2,500,000 tokens per day for free. That might not sound like a lot, but you’d be surprised what you can actually do with that many tokens: by OpenAI’s rule of thumb that a token is about three-quarters of an English word, 250,000 tokens works out to roughly 190,000 words of combined input and output per day.
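If your own scripts make a lot of calls, a small tracker can tell you when you’re about to leave the free allowance. This sketch assumes each tier is one shared daily pool (as the limits above are described) and that the API model IDs are the lowercase names shown; both are assumptions, so treat the Usage page in your Platform dashboard as the authoritative source.

```python
from collections import defaultdict

# Assumption: API model IDs and one shared daily pool per tier.
FREE_TIERS = {
    "large": (250_000, {"gpt-5.2", "gpt-5.1-codex"}),
    "small": (2_500_000, {"gpt-5.1-codex-mini", "gpt-5-mini", "gpt-5-nano"}),
}


class DailyTokenBudget:
    """Track one day's token usage per free tier and report billable overage."""

    def __init__(self, tiers=FREE_TIERS):
        self.tiers = tiers
        self.used = defaultdict(int)  # tier name -> tokens used today

    def tier_for(self, model):
        for name, (_, models) in self.tiers.items():
            if model in models:
                return name
        return None  # model isn't covered by the free allowance

    def record(self, model, tokens):
        """Record a call's tokens; return how many fall outside the free allowance."""
        tier = self.tier_for(model)
        if tier is None:
            return tokens
        limit = self.tiers[tier][0]
        free_left = max(limit - self.used[tier], 0)
        self.used[tier] += tokens
        return max(tokens - free_left, 0)

    def remaining(self, model):
        """Free tokens still available today for this model's tier."""
        tier = self.tier_for(model)
        if tier is None:
            return 0
        return max(self.tiers[tier][0] - self.used[tier], 0)
```

Create a fresh `DailyTokenBudget` whenever the daily window resets and feed `record()` the token count the API reports for each call. Any non-zero return value is usage you’d pay for at the model’s normal rate.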

It’s worth noting, however, that if you go over those limits, you’ll start paying for tokens at the current rate of whichever model you’re using.

API access to LLMs is actually really useful

From Open WebUI to building custom automations, your homelab will benefit

So why does free API access matter? I’m glad you asked. With API access to AI models, you can use them however you want, not just in a web chat app.

For example, n8n can use AI models through their APIs for a wide range of tasks. I’ve started using AI via API in my n8n workflows for things like content generation, ideation, and more. You could have n8n monitor your website with a periodic ping, and if the site stops responding, an AI model steps in to try to diagnose what’s going on and sends you a message.
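In n8n you’d build that monitor visually, but the logic is simple enough to sketch in Python: check the site, and if the check fails, turn the error into a prompt for the AI model. The helper names and the prompt wording are hypothetical, and the `opener` parameter is only there so the check can be tested without a live site.

```python
import socket
import urllib.error
import urllib.request


def check_site(url, timeout=10.0, opener=urllib.request.urlopen):
    """Return (ok, detail) for one HTTP health check of url."""
    try:
        with opener(url, timeout=timeout) as resp:
            return True, f"HTTP {resp.status}"
    except urllib.error.HTTPError as err:
        # The server answered, but with an error status (4xx/5xx).
        return False, f"HTTP {err.code} {err.reason}"
    except (urllib.error.URLError, socket.timeout, ConnectionError) as err:
        # DNS failure, refused connection, timeout, and similar network errors.
        return False, f"{type(err).__name__}: {err}"


def build_diagnosis_prompt(url, detail):
    """Hypothetical prompt handed to the AI model when a check fails."""
    return (
        "You are helping diagnose a homelab outage.\n"
        f"The site {url} failed its health check with: {detail}\n"
        "List the most likely causes in order and the first command to run for each."
    )
```

On a failed check, the prompt would go to whichever model you’ve connected, and the model’s answer becomes the message you receive.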

Or you could set up n8n to take an idea you have for a YouTube video, use AI to research it, and generate a starter script. There’s a lot you can do with API access to AI models.

Another way to use API access to AI is through Open WebUI.

You might wonder why you’d even need Open WebUI with models like GPT-5.2, but there’s a really good reason to run your own AI chat interface. With Open WebUI and free API access to GPT-5.2, you can use OpenAI’s more powerful models without paying for a ChatGPT Plus subscription. As long as you stay under the daily free limits, that lets you trial (or just straight up use) the better models without paying a dime.

Open WebUI also lets you set custom system prompts, both for the entire application and per chat. For example, I have a dedicated chat with a custom system prompt for formatting an outline. I write my articles in Markdown, but I do my outlines in markup.

So I paste my markup outline into that chat, and the model strips out everything but the top-level headers and rewrites the outline in Markdown using sentence case. This used to be a manual job, but with Open WebUI it now takes just a second. I tried using regular ChatGPT or Gemini chats for this in the past, but they would often get confused and not give me the code output in Markdown that I needed. A custom system prompt fixes that, and I haven’t found an easier way to set one up than Open WebUI with free API access to OpenAI’s models.
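Under the hood, a system prompt is just a message with the `system` role placed at the start of the conversation sent to OpenAI’s Chat Completions API. Here’s a rough Python sketch of how a client could stack an app-wide prompt and a per-chat prompt. The prompt wording, the `build_messages` helper, and the choice to join both prompts into one system message are assumptions for illustration, not Open WebUI’s actual implementation.

```python
# Hypothetical prompts; Open WebUI's real merging behavior may differ.
GLOBAL_PROMPT = "Be concise."
OUTLINE_PROMPT = (
    "Keep only the top-level headers from the outline the user pastes. "
    "Rewrite each one in sentence case as a Markdown ## heading, "
    "and return the result inside a single fenced code block."
)


def build_messages(user_text, chat_prompt=None, global_prompt=GLOBAL_PROMPT):
    """Combine the app-wide and per-chat prompts into one system message."""
    system = "\n\n".join(p for p in (global_prompt, chat_prompt) if p)
    messages = [{"role": "system", "content": system}] if system else []
    messages.append({"role": "user", "content": user_text})
    return messages
```

The resulting list drops straight into the `messages` field of a Chat Completions request body.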

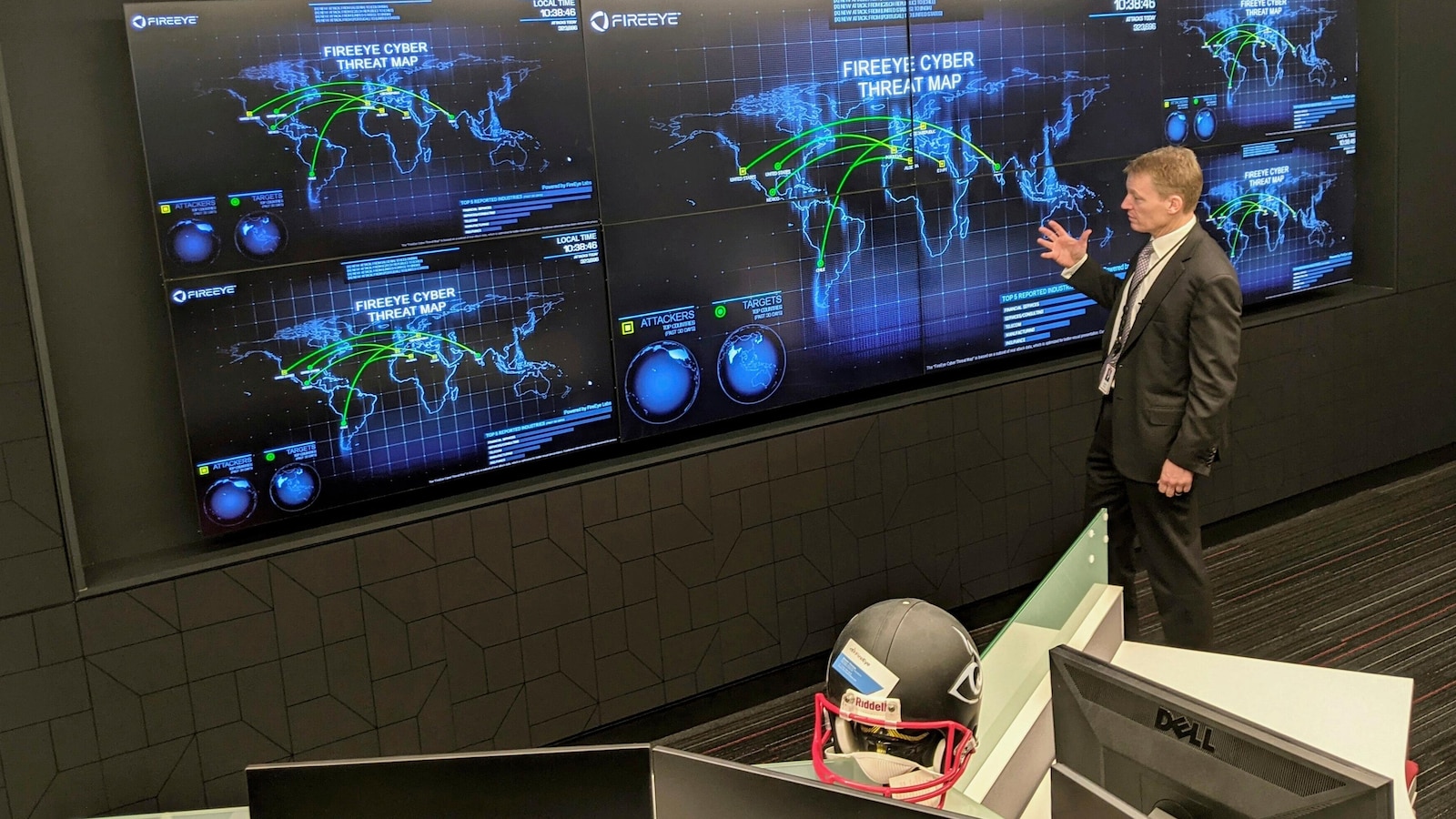

There’s always a catch

Your chats aren’t private, but they weren’t private without the API either

With anything free, there’s always a catch, and free API access to AI models is no exception. Normally, with API access, you can choose not to share your inputs and outputs with the AI company. Of course, whatever you send to OpenAI’s API still has to hit its servers, but by default OpenAI doesn’t use API inputs and outputs for training unless you opt in.


To get the free API access, though, you have to agree to share that data with OpenAI. That might be a dealbreaker for you, and I get it, but let me lay everything on the table: your chats aren’t private anyway. When you use the ChatGPT or Gemini web apps, your conversations are, by default, already used for training and retained.

So if you’re fine using the web apps, you should be fine using the API and sharing your inputs and outputs just the same. And if you ever want to stop sharing, you just flip the toggle off and start paying for API usage. It’s nice that this is an option you can turn on and off at will.
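Because OpenAI API keys can be created per project, one middle ground is to keep two keys: one from a project with sharing enabled (free daily tokens) and one from a project with sharing disabled (billed normally), and route prompts between them. This sketch assumes you’ve set projects up that way; the environment variable names and the keyword list are hypothetical, and keyword matching is a crude filter, so treat it as a starting point rather than a privacy guarantee.

```python
import os

# Hypothetical env vars: a key from a sharing-enabled project (free tokens)
# and a key from a sharing-disabled project (paid).
SHARED_KEY_VAR = "OPENAI_KEY_SHARED"
PRIVATE_KEY_VAR = "OPENAI_KEY_PRIVATE"

# Hypothetical markers for prompts you'd rather not share with OpenAI.
SENSITIVE_MARKERS = ("password", "api key", "ssn", "medical")


def is_sensitive(prompt):
    """Crude case-insensitive keyword check."""
    lowered = prompt.lower()
    return any(marker in lowered for marker in SENSITIVE_MARKERS)


def pick_api_key(prompt, env=os.environ):
    """Use the private (paid) key for sensitive prompts, the shared key otherwise."""
    var = PRIVATE_KEY_VAR if is_sensitive(prompt) else SHARED_KEY_VAR
    key = env.get(var)
    if not key:
        raise RuntimeError(f"{var} is not set")
    return key
```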

OpenAI even lets you choose whether to share just one project or all of your projects, but only shared projects get the free API usage.

Nothing in life is truly free, but that’s okay

At the end of the day, free API access to AI models is never going to be truly free. However, I think sharing the inputs and outputs of my API chats is fine for my use case, as well as for most people’s.

If you really want privacy, then you’ll simply have to pay for it.
