Using a Large Language Model (LLM) with Home Assistant has a number of benefits. It can add natural language understanding, power your voice assistant, and even analyze images. A local LLM can help maintain privacy, but you don't always need to use the largest model.

Using a local LLM with Home Assistant

Keep your data private

There are plenty of ways that an LLM can make Home Assistant more powerful. One popular usage is to hook up a cloud-based model from a company such as OpenAI and use it as a conversation assistant for the Assist voice assistant.

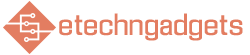

This allows you to use natural language commands to control your smart home, rather than having to remember the specific phrases that will turn your lights on and off. The trouble with using a cloud-based LLM is that data about your smart home has to be sent to the cloud to be processed. It means that information about your smart home ends up on third-party servers.

Home Assistant was designed to help maintain your privacy, so sharing information about your smart home with AI companies goes against this core principle. One solution is to use a local LLM. You can run models on your own hardware that can perform some of the same tasks that cloud-based LLMs can do.

How quickly or accurately a local LLM can perform these tasks depends both on the hardware you run it on and the models that you use.

Why the biggest model isn't always the best

Finding the sweet spot

LLMs often come in different sizes. You might see versions of the same model with values such as 4B, 9B, 70B.

These refer to the number of parameters the model has; a 70B model has 70 billion parameters, for example. These larger models often have more capacity for knowledge and reasoning. The flip side is that the more parameters a model has, the more VRAM is needed to store those parameters.

Some 70B models, for example, might need more than 100 GB of VRAM to run. That's beyond the reach of even high-end consumer GPUs unless you're running a multi-GPU stack, and if you don't have enough VRAM, the model won't run at all or will slow to a crawl. The challenge is finding a model that's small enough to run on your hardware, but powerful enough to handle the jobs that you want it to do.
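To see roughly why parameter count drives memory, here is a back-of-the-envelope sketch: the weights take about parameters × bits per parameter ÷ 8 bytes. The function name and the precision levels (16-bit, 8-bit, 4-bit) are my own assumptions for illustration, not taken from any particular tool, and the estimate ignores the context cache and runtime overhead, so real usage will be higher.

```python
def estimate_weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes).

    Rough rule of thumb only: ignores context cache and runtime overhead.
    """
    total_bytes = params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Model sizes mentioned in the article; precisions are illustrative assumptions.
    for size in (3, 4, 9, 70):
        for bits in (16, 8, 4):
            gb = estimate_weight_memory_gb(size, bits)
            print(f"{size}B at {bits}-bit: ~{gb:.1f} GB for weights")
```

By this estimate, a 70B model at 16-bit precision needs around 140 GB for its weights alone, while a 4B model quantized to 4 bits needs around 2 GB, which is why the small models fit comfortably on modest hardware.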

There are some useful tools, such as llmfit, that can tell you which models are best suited to run on your hardware. The good news is that, as the technology has developed, new smaller models have appeared that can outperform the very large models from just a few years ago. You no longer need to have insane amounts of VRAM to get decent performance from a local LLM.

Small local LLMs can run on basic hardware

You don't need an expensive GPU

If you don't have a dedicated GPU, it's not the end of the world. There are some smaller models that are capable of running on a CPU alone without needing to pass anything off to a GPU. These models can use the system RAM in your PC rather than fitting everything into VRAM.

While the performance can't match larger models running with a GPU, they can still do a job. I wanted to use a local LLM on my Beelink Mini PC that has 16 GB of RAM and no dedicated GPU. My main purpose was to take a list of events from my calendar and transform it into the text for a spoken morning briefing.

I'd seen a lot of people saying they'd found the Qwen 3.5 4B model to be a good sweet spot, so I decided to give it a try. Using this model, I was able to generate the briefing, although it took around 13 seconds. The text was fine, but it wasn't particularly inspiring.

In an effort to speed things up, I tried a smaller model, Llama 3.2 3B, which has fewer parameters. You might expect this model to produce a worse result, but it produced a much more natural-sounding output, and did it in under 6 seconds, less than half the time of the other model. It seems that size isn't everything.
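If you want to run a similar comparison yourself, here is a minimal sketch of the idea. It assumes the models are served by Ollama on its default local address, which is my assumption and not necessarily the setup used above. The helper names, the prompt wording, and the sample events are all hypothetical, and the model tag is a placeholder for whatever you have installed.

```python
import json
import time
import urllib.request

# Assumption: an Ollama server running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_briefing_prompt(events):
    """Turn a list of {'start', 'title'} dicts into a prompt for a spoken briefing."""
    lines = [f"- {event['start']}: {event['title']}" for event in events]
    return (
        "Turn the following calendar events into a short, friendly spoken "
        "morning briefing. Use plain sentences with no lists or markup.\n\n"
        + "\n".join(lines)
    )


def generate(model, prompt, url=OLLAMA_URL, timeout=120):
    """Send one non-streaming request and return (text, seconds taken)."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    request = urllib.request.Request(
        url,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    started = time.perf_counter()
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["response"], time.perf_counter() - started


if __name__ == "__main__":
    # Hypothetical sample data; in practice this would come from your calendar.
    events = [
        {"start": "09:00", "title": "Dentist appointment"},
        {"start": "13:30", "title": "Team call"},
    ]
    prompt = build_briefing_prompt(events)
    # Placeholder tag: add the other models you have pulled to compare them.
    for model in ["llama3.2:3b"]:
        text, seconds = generate(model, prompt)
        print(f"{model} took {seconds:.1f}s:\n{text}\n")
```

Timing each model on the same prompt and reading the results side by side is a quick way to find out whether a smaller model is good enough for the job on your own hardware.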

The largest model you can run isn't always the best choice; using a smaller model can be faster and may even give you better results.

A small local LLM isn't suitable for everything

It will struggle as a conversation agent

On a whim, I tried to see if I could use either of these models as conversation agents for Assist. This would let me use natural language voice commands with Assist, instead of having to use specific phrases.

As expected, both models failed miserably at this. On my hardware, there was too much context for these smaller models to process quickly, and it would take more than 20 seconds for my lights to turn on, which just wasn't usable. If you're running your local LLM without powerful hardware, it won't be suitable for every job, as it will either be too slow or incapable of doing what you want.

For some jobs, such as generating my morning briefings, however, a local LLM is a perfect way to get the results I want while maintaining my privacy.

Give a local LLM a try

If you've put off trying a local LLM because you didn't think your hardware could handle it, it's worth seeing what a small local model can do. Until I win the lottery and can afford a powerful AI rig, these small models will do just fine.
