Summary

curl is a ubiquitous CLI "browser" that fetches raw web responses (HTTP, FTP, SMTP, etc.). Pipe curl into grep/sed/awk or cron to automate data extraction, monitoring, and installs. Use curl to download/upload files, follow redirects, resume transfers, and handle auth.

cURL, short for Client URL, is a powerful command-line utility that comes pre-installed on pretty much every modern computer (whether it's Windows, macOS, or Linux). Think of curl as a web browser that lives in the terminal and works with raw text. This idea sounds pretty simple, but it's also why curl is omnipresent in modern tech.

Even though alternatives like wget exist, curl is not going anywhere. If you spend some time working with Linux, you will inevitably end up running a curl command.

What is curl

Normally, when you browse the internet, you do so with a web browser.

You launch the browser window, type in an address or query, and the browser renders the website for you. Rendering, in this context, means that the browser interprets or "processes" the code it receives into a visual and interactive page. That's how you get a colorful, styled web page, which you can scroll through and click.

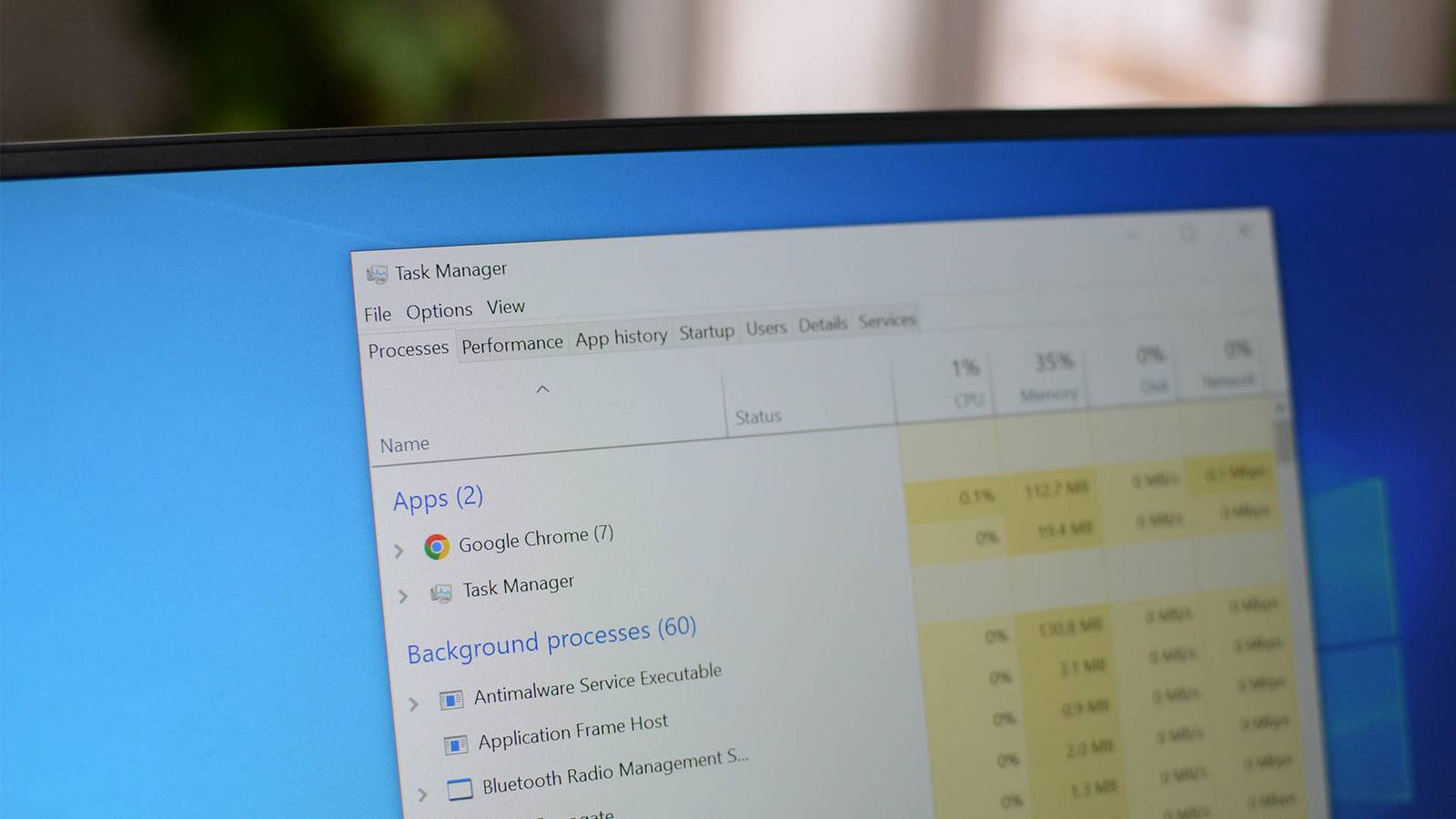

Since they have to render code, browsers tend to be heavy on resources. The curl utility is a kind of browser too, except it doesn't render the code. When you request a website through curl, it just displays the raw code in text format within the terminal window.

For example, if you try to load https://www.google.com with curl, you'll get the actual HTML code that makes up the website.

What can you do with curl

But what's the point of printing this raw code in the terminal? It seems useless if you're thinking about it as a consumer or visitor. However, that raw information can be incredibly useful if you think about it in terms of automation or data extraction.

Linux has powerful CLI tools for processing text output. You could "pipe" the curl output to grep, sed, or awk and get useful information. You could chain a curl command with these tools to get stock information, weather reports, find discount codes, monitor price drops, and so on.
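As a minimal sketch of that kind of pipeline, the function below pulls the page title out of HTML using grep and sed. The function name `extract_title` and the sample page are made up for illustration, and a plain pattern match like this is only an approximation of real HTML parsing.

```shell
#!/bin/sh
# Read HTML on stdin and print the text of the first <title> element.
# This is a simple pattern match, not a real HTML parser.
extract_title() {
  grep -o '<title>[^<]*</title>' | head -n 1 | sed -e 's/<title>//' -e 's/<\/title>//'
}

# Offline demonstration with a made-up page:
printf '<html><head><title>Example Domain</title></head></html>\n' | extract_title

# Live usage (needs network access):
#   curl -s https://example.com | extract_title
```

The `-s` flag keeps curl's progress meter out of the way so that only the page content reaches the next command in the pipe.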

Whatever info you can see on a website, it's technically possible to extract it using curl. You might have already used curl to run installer scripts from GitHub. Those commands download a shell script and pipe it straight into a shell such as Bash, which executes it.

Those curl commands are usually operating system agnostic and make it super simple to install a program on any target OS. For example, you can install Ollama (an app for running LLMs locally) with a simple curl command.

`curl -fsSL https://ollama.com/install.sh | sh`

This command shows you weather forecasts for your current location.

`curl -s https://wttr.in`

You can get your public IP address with this curl command.

`curl ifconfig.me`

To get crypto prices, you can run this.

`curl rate.sx/btc`

You can make powerful shell scripts with curl

What actually makes curl powerful is that you can pipe its output to other Linux tools or include it in shell scripts to automate things.
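Here is one way the public IP command could be wrapped in a script, as a rough sketch. The function names `is_ipv4` and `log_public_ip` are hypothetical, and the check assumes the service answers with a bare IPv4 address (on some networks it may return an IPv6 address instead, which this sketch would reject).

```shell
#!/bin/sh
# Return success if the argument looks like a dotted IPv4 address.
# Only the shape is checked; octet ranges (0-255) are not validated.
is_ipv4() {
  printf '%s\n' "$1" | grep -Eq '^([0-9]{1,3}\.){3}[0-9]{1,3}$'
}

# Fetch the public IP and print a timestamped log line, or fail.
log_public_ip() {
  ip=$(curl -s --max-time 10 ifconfig.me) || return 1
  is_ipv4 "$ip" || return 1
  printf '%s %s\n' "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "$ip"
}

# Offline check of the validation step (203.0.113.7 is a documentation address):
is_ipv4 "203.0.113.7" && echo "looks like an IPv4 address"
is_ipv4 "<html>error</html>" || echo "rejected"

# Live usage (needs network access):
#   log_public_ip >> "$HOME/public-ip.log"
```

Validating the response before using it matters in scripts, because a service that is down may return an error page rather than the value you expected.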

Any of these outputs could be part of a custom shell script depending on what you're trying to accomplish. The curl command to get crypto could be combined with cron to send you price change alerts (curl can also send notifications). For web developers, curl is useful because it shows them the actual status of their websites.

A browser might say "something went wrong" when a site fails to load. With curl, you can see the actual status line (like 200 OK or 500 Internal Server Error) above the text dump when you ask it to include the response headers with -i. A simple shell script that uses curl and cron can automatically check this status at regular intervals and send alerts if the website goes down. It can even save the output into a daily report log.
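A status check like the one described could be sketched as follows. The function names, the log path, and the crontab entry are all placeholders, and treating every 2xx or 3xx response as "UP" is an assumption you may want to tighten for your own site.

```shell
#!/bin/sh
# Print only the HTTP status code for a URL.
# curl reports 000 when no HTTP response was received at all.
http_status() {
  curl -s -o /dev/null --max-time 10 -w '%{http_code}' "$1"
}

# Classify a status code: 2xx and 3xx count as UP, everything else as DOWN.
classify_status() {
  case "$1" in
    2??|3??) echo "UP" ;;
    *)       echo "DOWN" ;;
  esac
}

# Build one log line from a URL and a status code.
report_line() {
  printf '%s %s %s %s\n' "$(date -u +%Y-%m-%dT%H:%M:%SZ)" "$1" "$2" "$(classify_status "$2")"
}

# Offline demonstration:
classify_status 200   # prints UP
classify_status 500   # prints DOWN

# Live usage (needs network access); SITE and the log path are placeholders:
#   SITE="https://example.com"
#   report_line "$SITE" "$(http_status "$SITE")" >> "$HOME/site-status.log"
# Hypothetical crontab entry that runs such a script every 5 minutes:
#   */5 * * * * /path/to/check-site.sh
```

Here `-o /dev/null` discards the page body and `-w '%{http_code}'` prints just the numeric status, which is easier to test in a script than the full header dump.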

You can download and upload content with curl

In addition to the raw text output, curl can also be used for downloading content from the web. Pass the download URL to curl -O, and it'll save the file to your current directory under its remote name (use -o with a filename to pick a different one). If a link redirects to a different page to download some content, add -L and curl will follow the redirect from the original URL to the download page.

If a download gets interrupted, curl lets you resume it from the last byte received (with -C -). Occasionally, a website will want you to log in before giving you a download link. With curl, you can pass that session cookie with -b along with the download URL to save the file.
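The download options above can be combined in a small wrapper, sketched here under a few assumptions: the function name `fetch_file`, the `DRY_RUN` switch, the URL, and the cookie value are all invented for illustration. The long option names are used so that each one is easier to read than its single-letter form.

```shell
#!/bin/sh
# fetch_file URL [COOKIE]
# Download URL into the current directory, following redirects and
# resuming a partial file if one exists. COOKIE is an optional
# "name=value" string sent with the request.
# When DRY_RUN is set, the curl command is printed instead of executed.
fetch_file() {
  url=$1
  cookie=${2:-}
  # --fail          treat HTTP errors (4xx/5xx) as a failed download
  # --location      follow redirects (same as -L)
  # --continue-at - resume from where a partial file left off (same as -C -)
  # --remote-name   save under the file name from the URL (same as -O)
  set -- --fail --location --continue-at - --remote-name
  if [ -n "$cookie" ]; then
    set -- "$@" --cookie "$cookie"
  fi
  if [ -n "${DRY_RUN:-}" ]; then
    echo "curl $* $url"
  else
    curl "$@" "$url"
  fi
}

# Offline demonstration: print the command without contacting any server.
DRY_RUN=1 fetch_file "https://example.com/big.iso" "session=abc123"
```

Running the same command again after an interruption picks the transfer back up, provided the server supports range requests.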

You're not limited to HTTP/HTTPS either. Using curl, you can also transfer files over FTP, SSH (as SFTP or SCP), and SMB. It even supports the IMAP, POP3, and SMTP protocols, meaning you can download and send emails.
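To give a feel for the non-HTTP side, here is a rough sketch of sending an email over SMTP and fetching a file over FTP. The function names, addresses, server URLs, and credentials are all placeholders, and whether a given protocol works depends on how your copy of curl was built (`curl --version` lists the supported protocols).

```shell
#!/bin/sh
# Compose a minimal email (headers, a blank line, then the body) on stdout.
# Arguments: sender, recipient, subject, body.
build_message() {
  printf 'From: %s\nTo: %s\nSubject: %s\n\n%s\n' "$1" "$2" "$3" "$4"
}

# Send a message file through an SMTP server, requiring TLS.
# Arguments: server URL, "user:password", sender, recipient, message file.
send_mail() {
  curl --ssl-reqd --url "$1" --user "$2" \
       --mail-from "$3" --mail-rcpt "$4" --upload-file "$5"
}

# Download a file from an FTP server into the current directory.
# Arguments: "user:password", ftp:// URL.
ftp_download() {
  curl --user "$1" --remote-name "$2"
}

# Offline demonstration of the message format:
build_message "alice@example.com" "bob@example.com" "Site down" "example.com returned 500"

# Live usage (needs a real server and credentials; all values are placeholders):
#   build_message "alice@example.com" "bob@example.com" "Hi" "Hello" > message.txt
#   send_mail "smtp://mail.example.com:587" "alice:secret" \
#             "alice@example.com" "bob@example.com" message.txt
#   ftp_download "alice:secret" "ftp://ftp.example.com/pub/report.csv"
```

With SMTP, curl uploads the file as the complete message, so the headers have to be part of the file itself, which is why the sketch builds them explicitly.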

You could write a shell command that downloads all your emails from a mail server and archives them in raw format.

The curl toolkit is a must-have for any power user or developer. You can use it to extract useful data from websites, download or upload files, and interact with servers directly (asking for data or submitting info). Since it's a command-line tool, you can pass off its output to other Linux tools and create automated pipelines or shell scripts.
